There is no shortage of places where they could have been more upfront about the limitations of the study.

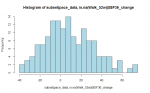

These are the numbers I get for missing 52-week walk scores:

| | APT | CBT | GET | SMC |
|---|---|---|---|---|
| Missing | 48 | 38 | 50 | 42 |
| Out of | 159 | 161 | 160 | 160 |

I get about the same. The particular comparison I was concerned about is GET versus SMC, since, if I remember rightly, GET was the group that had a slightly better average distance walked at 52 weeks. This was used to justify the claim that GET is effective. If there were some way of taking into account that more people failed to do the walk in GET than in SMC, this would, I suggest, wipe out that small difference.

Here are a few approximate counts I made comparing these groups for numbers who walked 50 or more metres less than at the start, and numbers who didn't do the second walk, and combining these to show how many did significantly worse:

| | APT | CBT | GET | SMC |
|---|---|---|---|---|
| 50m less | 18 | 18 | 10 | 17 |
| No walk | 47 | 38 | 49 | 39 |
| Total | 65 | 56 | 59 | 56 |

I suspect these between-group differences on this measure are not statistically significant. The APT group may be deemed slightly worse, but given that they've spent a year being told to do 70% of their base activity level, that's hardly surprising.
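For anyone who wants to check that suspicion, here is a rough sketch of a Pearson chi-square test on the "did worse" totals across the four groups. It assumes the denominators are the "Out of" figures from my missing-scores counts (159, 161, 160, 160), and the counts themselves are only approximate, so treat the result as indicative rather than definitive. The function name and the hard-coded 5% critical value for 3 degrees of freedom (7.815) are my own choices for illustration.

```python
# Counts copied from the post; they are approximate hand counts.
groups = ["APT", "CBT", "GET", "SMC"]
worse = [65, 56, 59, 56]        # walked 50 m less, or did no second walk
totals = [159, 161, 160, 160]   # ASSUMED denominators ("Out of" row)


def chi_square_statistic(events, totals):
    """Pearson chi-square statistic for a 2 x k table (event vs no event)."""
    total_events = sum(events)
    total_n = sum(totals)
    stat = 0.0
    for e, n in zip(events, totals):
        expected_event = n * total_events / total_n
        expected_other = n - expected_event
        stat += (e - expected_event) ** 2 / expected_event
        stat += ((n - e) - expected_other) ** 2 / expected_other
    return stat


# Critical value of chi-square with 3 degrees of freedom at the 5% level.
CRITICAL_5PCT_DF3 = 7.815

chi2 = chi_square_statistic(worse, totals)
print(f"chi-square = {chi2:.2f} (df = {len(groups) - 1})")
print("significant at 5%" if chi2 > CRITICAL_5PCT_DF3 else "not significant at 5%")
```

On these counts the statistic comes out well below the critical value, which fits the suspicion that the groups don't differ meaningfully on this measure.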

What they do show (to me) is that about 35% to 40% of participants in all groups got significantly worse between the particular days they did the walking test at the start of the trial and the day they did, or were unable to do, the walking test after a year. If that doesn't show the biopsychosocial model is a dead duck, I don't know what does!
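The percentages are easy to reproduce. This is just a sketch of the arithmetic, again assuming the group sizes are the "Out of" figures from my missing-scores counts (the variable and function names are mine):

```python
groups = ["APT", "CBT", "GET", "SMC"]
fifty_less = [18, 18, 10, 17]   # walked 50 m or more less than at baseline
no_walk = [47, 38, 49, 39]      # did not do the 52-week walk
totals = [159, 161, 160, 160]   # ASSUMED denominators ("Out of" row)


def percent_worse(fifty_less, no_walk, totals):
    """Percentage per group who walked 50 m less or did no second walk."""
    return [100 * (f + m) / n for f, m, n in zip(fifty_less, no_walk, totals)]


percentages = percent_worse(fifty_less, no_walk, totals)
for group, pct in zip(groups, percentages):
    print(f"{group}: {pct:.1f}%")
```

Every group lands somewhere between roughly 35% and 41%.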

What this particular measure doesn't show, of course, is whether, as a result of pushing themselves to do this walk, many of the participants suffered PEM afterwards. But then that opens up the whole hornets' nest of the invalidity of all measures used in the trial. And this is not the place for that discussion.