Esther12

I've now edited this first post to include some of the points made by others too.

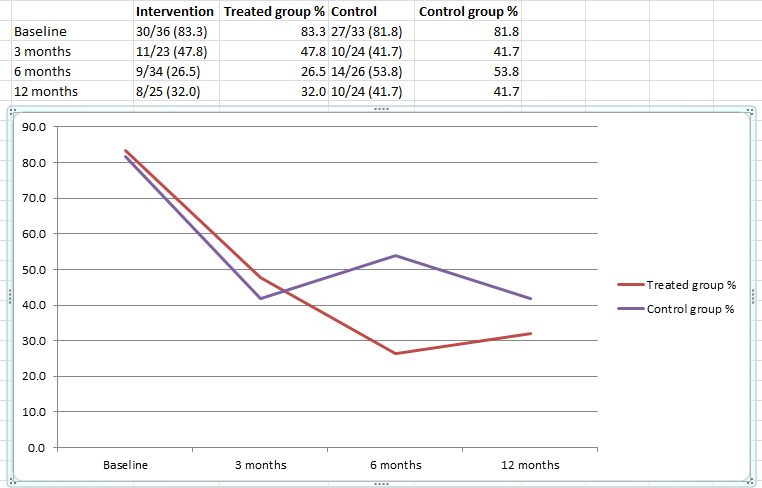

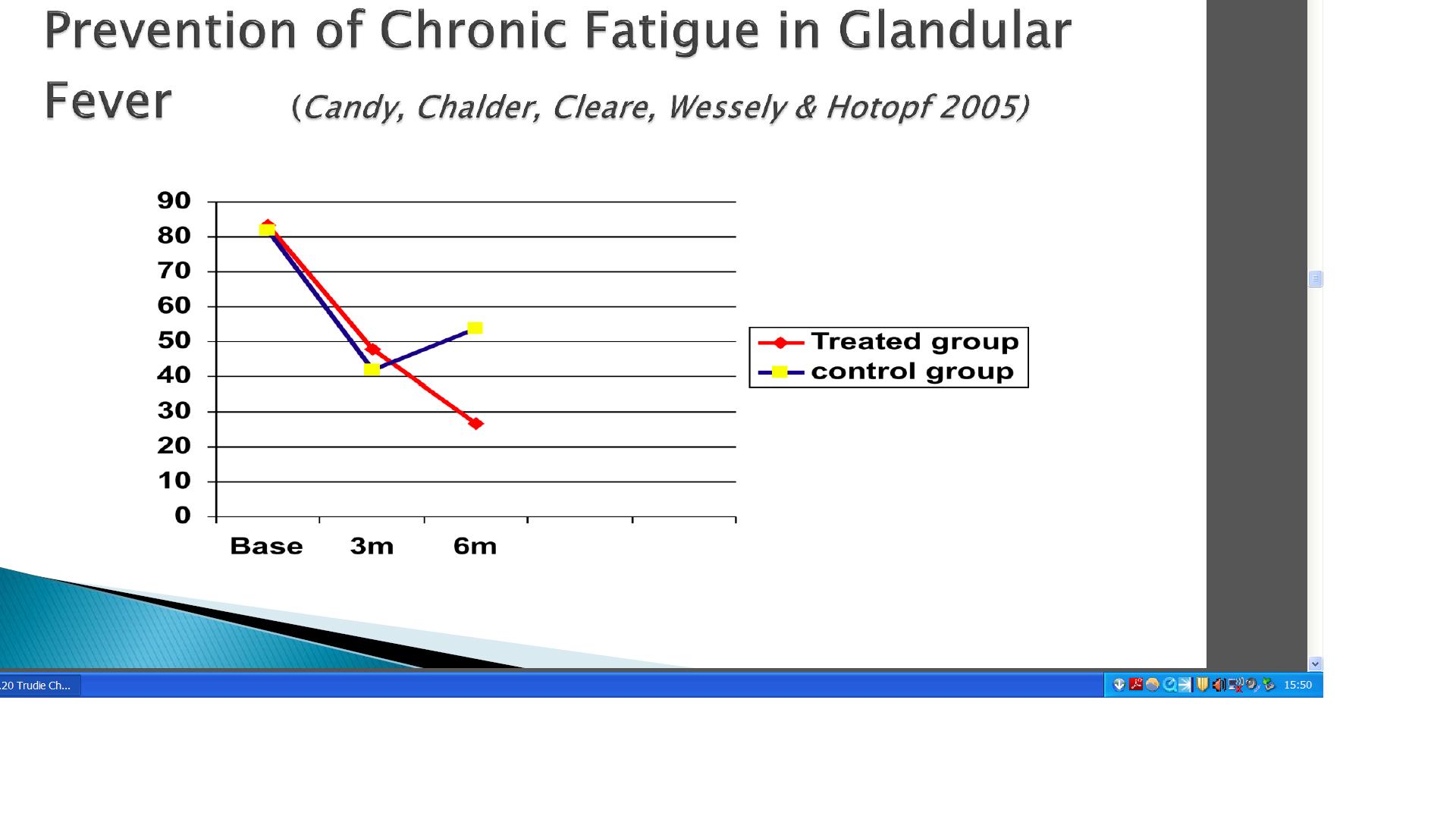

http://www.kcl.ac.uk/content/1/c6/01/47/68/EBVRCT.pdf

Edit: this seems to be offline there; try here: https://docs.google.com/viewer?a=v&...7jV2DO&sig=AHIEtbQH6DoJAJZL7zayQ5eotg0DJoc_Kw

A randomised controlled trial of a psycho-educational intervention to aid recovery in infectious mononucleosis

Bridget Candy, Trudie Chalder, Anthony J Cleare, Simon Wessely, Matthew Hotopf

I've often seen this study cited as evidence that it is how patients respond to their illness, rather than the infection itself, that is most important in determining levels of long-term disability.

It's a bit of a rubbish design: only the intervention group got therapist time, while the control group just got a leaflet. I've seen these results promoted as if they were really dramatic, but if you look at the differences, much of the gap at six months could be explained by those in the 'intervention group' whose fatigue improved being more willing to fill in questionnaires than those in the 'control group'. At 12 months, when the two groups have more similar return rates, the levels of fatigue reported are pretty similar:

> At 12 months, differences between groups were more modest, and not statistically significant. This partly reflects reduced statistical power due to incomplete follow up. It also might reflect the natural history of IM related fatigue.

Considering this was not well controlled, and that at 12 months there was no statistically significant difference between the levels of fatigue reported by the two groups, I think it would be fair to laugh at anyone trying to present this study as really compelling evidence for anything.

They actually mentioned this problem in the paper:

> This was a small study, and the estimate of treatment effect was imprecise. The follow-up rates were acceptable, but there were more incomplete data for the control group at 6 months. Unequal follow-up rates may explain the more modest differences between the intervention and control groups when methods are used to take account of missing data. Those who failed to complete questionnaires at follow up had fewer symptoms at baseline, and dropped out of the trial.
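To make the attrition point concrete, here's a rough Python sketch using made-up numbers (nothing in it comes from the trial itself). It assumes both groups have exactly the same true rate of prolonged fatigue, 30%, and that the only difference is who sends their questionnaires back: in the hypothetical control group, patients who have recovered are assumed to reply less often (60%) than those who are still fatigued (90%), in line with the paper's note that non-responders were less symptomatic. The `Arm` class and both functions are illustrative names of my own, not anything from the paper.

```python
from dataclasses import dataclass


@dataclass
class Arm:
    """One trial arm under a deliberately hypothetical attrition scenario."""
    name: str
    true_fatigue_rate: float      # proportion who really have prolonged fatigue
    response_if_fatigued: float   # chance a fatigued patient returns the questionnaire
    response_if_recovered: float  # chance a recovered patient returns it


def response_rate(arm: Arm) -> float:
    """Expected proportion of the arm that returns a questionnaire."""
    return (arm.true_fatigue_rate * arm.response_if_fatigued
            + (1 - arm.true_fatigue_rate) * arm.response_if_recovered)


def observed_fatigue_rate(arm: Arm) -> float:
    """Expected fatigue rate among only those who returned questionnaires."""
    fatigued_returns = arm.true_fatigue_rate * arm.response_if_fatigued
    return fatigued_returns / response_rate(arm)


if __name__ == "__main__":
    # Hypothetical: both arms share the SAME true fatigue rate of 30%.
    intervention = Arm("intervention", 0.30, 0.90, 0.90)
    # Assumption: recovered control patients are less inclined to reply.
    control = Arm("control", 0.30, 0.90, 0.60)
    for arm in (intervention, control):
        print(f"{arm.name}: response {response_rate(arm):.0%}, "
              f"observed fatigue {observed_fatigue_rate(arm):.0%}")
```

Running it prints an observed fatigue rate of 30% for the intervention arm and 39% for the control arm, even though the true rate is identical in both; the whole gap comes from who returned their forms. This doesn't model the methods the paper used to account for missing data; it only shows how unequal follow-up on its own can manufacture a difference.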

Peter White strangely forgot to mention these problems when he discussed this study, from around fourteen minutes in here: http://www.scivee.tv/node/6895

> [Transcript] So let me just move on to an important question about prevention. Just one slide on this. This was a study done by a group of people at King's. Where they just, a small study, looked at 69 patients with acute IM in a RCT comparing a brief rehabilitation of a nurse going in and giving them advice about getting back to normal activity in a safe and gradual way, compared with being given a leaflet about what mono does to you. And they found that those who had the brief intervention were half as likely, half as likely, a huge effect size, of having prolonged fatigue six months later.

White also cites this study in his presentation for the Gibson Parliamentary Group, which was looking into the research around ME/CFS and which reported concerns about the links between the insurance industry and researchers (White being a prominent example of this). I wonder if their report would have been harsher had they not been misled about the value of psychosocial interventions:

www.erythos.com/gibsonenquiry/Docs/White.ppt

It seems a bit misleading to claim "Educational intervention, based on graded return to activity, halved the incidence of prolonged fatigue" when there was no statistically significant difference between the two groups at twelve months.

Chalder also cites this paper in this presentation: http://www.mental-health-forum.co.uk/assets/files/11.20 Trudie Chalder FINAL 169FORMAT.pdf

Strangely, her graph does not include the 12-month data. Purple did a more complete graph:

More useful, but rather less impressive.

That last Chalder presentation was from 2012. This trial had just 36 people in the therapy group and found no statistically significant difference at 12 months between the group receiving therapist time and the group who just got a leaflet. That it is still being used to sell their expertise a decade after it was completed is indicative of the quality of evidence they have to support their claims.

The control group's leaflet could even be viewed as a nocebo:

> The control group received a standardised fact-sheet about infectious mononucleosis, which gave no advice on rehabilitation

If they theorise that fear related to viral infection is a significant factor in CFS, they could have expected such a leaflet to have a negative effect (depending upon what exactly the leaflet said).

So many of their results just look like homeopathy to me: act nice to patients and get slightly better questionnaire results, because (i) those who are feeling better are more likely to feel grateful and so complete their forms, and (ii) people tend to try to be positive about those who they think have tried to help them.

And people wonder why patients don't trust White and Chalder to present the data from the PACE trial in a fair and reasonable manner.

PS: The more stuff I read from around the time I got ill, the more pissed off I get at the poor evidence base for the advice I was given. If they were that ignorant about what I should be doing, they should have just been honest about it and left me to do whatever I thought was best, instead of incompetently managing the psychosocial setting of my illness and promoting 'positive' cognitions - bloody bastards.

PPS: In post #7 I link to a Chalder presentation where she has a graph of the data from this study, but has removed the data from after 6 months! It's so annoying to think that people are just going to trust her without looking things up.
